The EU AI Act is already changing how HR and people leaders evaluate software. Across Europe, teams are asking sharper questions about how AI is used in performance management, what counts as high-risk, and whether vendors can clearly explain where human judgment begins and ends. Those questions are only becoming more important as the Act’s implementation continues through 2026 and beyond, with the European Commission still issuing additional guidance to help organizations apply the rules in practice.

For HR leaders, this is not just a legal issue. It is a governance issue, a trust issue, and in many cases a buying decision. If a platform uses AI in ways that affect employees, leaders need to know whether that AI is helping people work better or is crossing into decision-making that brings additional compliance obligations and risk under the Act.

At Betterworks, we believe that distinction matters. AI can play a valuable role in performance management when it helps people work with more clarity, consistency, and confidence. It should not replace human accountability for evaluating performance, making employment decisions, or determining outcomes that affect people’s careers.

A simple explanation of the EU AI Act

The EU AI Act is the European Union’s risk-based framework for AI. It does not treat every AI use case the same way. Instead, it classifies AI applications based on the level of risk they create, with stricter requirements for uses that can materially affect health, safety, or fundamental rights. The Act also has extra-territorial reach. Providers and deployers outside the EU can still fall within scope when the output of their AI system is used in the Union.

That matters for global HR technology providers and for multinational employers. A vendor does not need to be headquartered in Europe for the Act to become relevant. The practical question is whether AI outputs are being used in relation to employees or workers in the EU, and if so, for what purpose.

The headline for HR leaders is straightforward: the EU AI Act is not a blanket ban on AI in HR. But it does require a more careful look at how AI is designed, how it is used, and whether it materially influences decisions about people.

Where performance management tools come under scrutiny

The Act explicitly identifies certain employment-related uses of AI as high-risk. Annex III includes AI intended to make decisions affecting the terms of work-related relationships, promotion, or termination; to allocate tasks based on individual behavior or personal traits; or to monitor and evaluate the performance and behavior of people in those relationships. In other words, employment contexts are not peripheral under the Act. They are one of the areas the law treats most seriously.

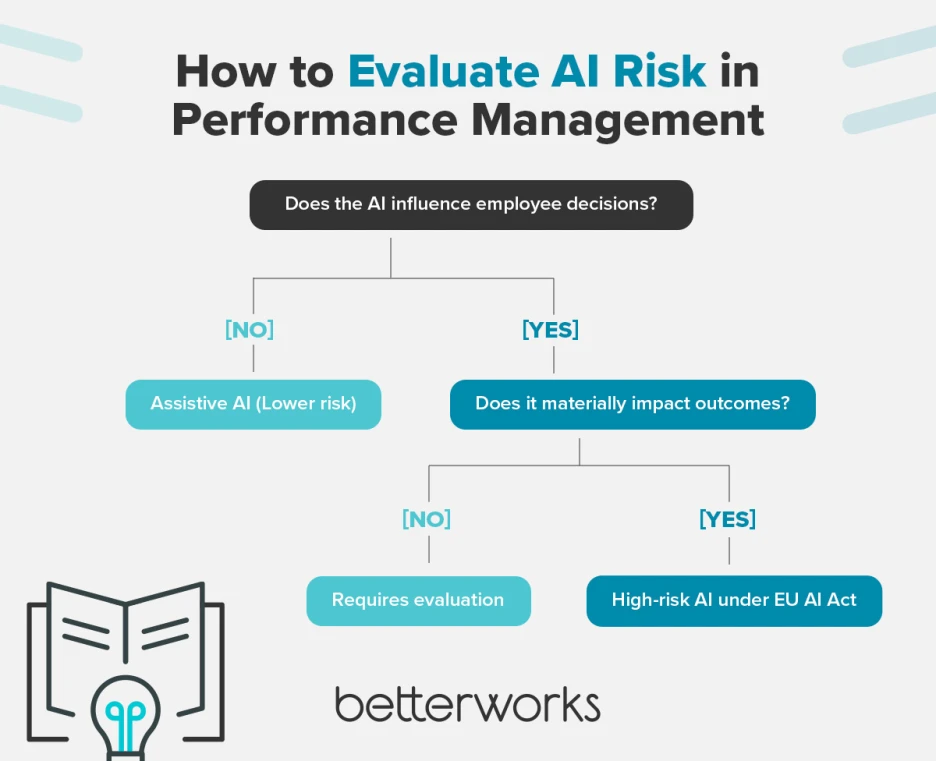

For HR leaders, this is where precision matters. Not every AI feature inside an HR platform will be treated the same way. A writing assistant that helps a manager improve clarity in feedback is not the same thing as a system that autonomously scores employees, infers promotion readiness, or determines who should be moved, rewarded, or filtered out. The words “AI in HR” can describe both scenarios, but from a governance perspective they are very different.

This is one reason the conversation around AI in performance management has become more nuanced. The key issue is not whether AI exists in the workflow. The key issue is what the AI is intended to do, how much influence it has, and whether meaningful human oversight remains in place.

The practical distinction that matters most: assistive AI vs. decision-making AI

This is the line HR leaders should focus on.

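Under Article 6(3), the Act recognizes that an AI system listed in Annex III may not be treated as high-risk when it does not pose a significant risk of harm to people’s health, safety, or fundamental rights, including by not materially influencing the outcome of decision-making. The Act lists the situations in which this can apply, such as when a system performs a narrow procedural task or improves the result of a previously completed human activity. The exception does not apply when a system performs profiling of individuals, and a provider relying on it must document its assessment. The Commission has also said it will issue more practical guidance in 2026 on high-risk classification and related obligations, which underscores that implementation is still being clarified.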

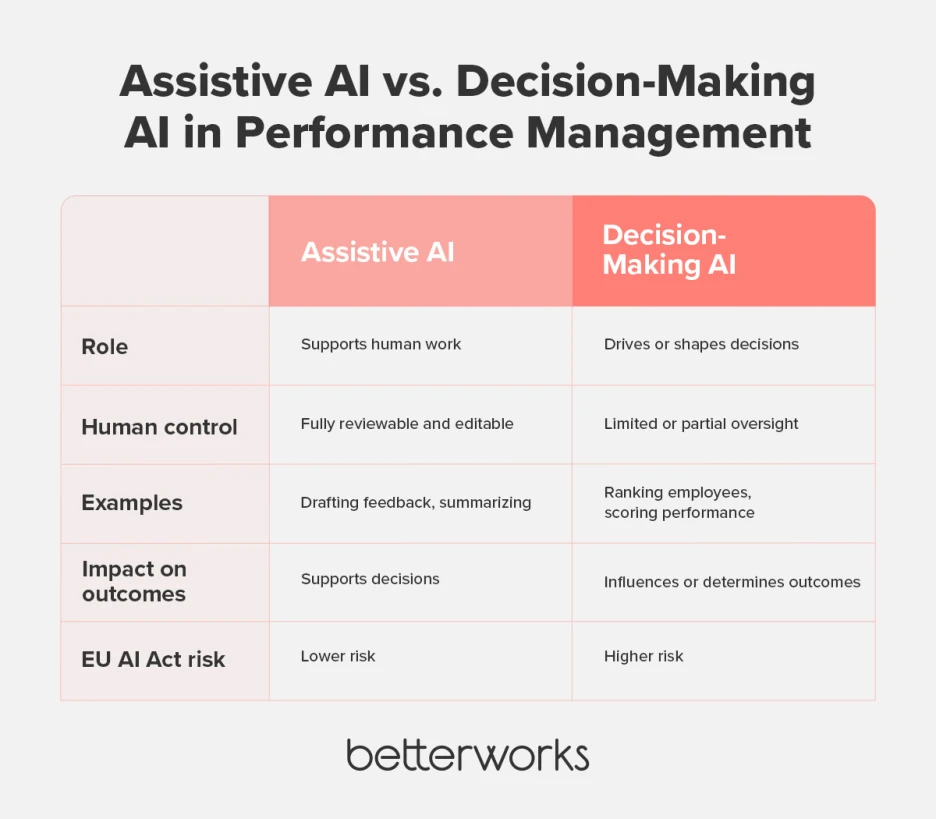

In practice, that means there is an important difference between assistive AI and decision-making AI.

Assistive AI helps people draft, summarize, prepare, or improve human-created work. It can support consistency, save time, and make it easier for managers and employees to communicate clearly. But the output remains reviewable, editable, and rejectable by a person who is responsible for the final judgment.

Decision-making AI goes further. It ranks, scores, infers, recommends, or determines outcomes in ways that materially shape what happens to an employee. That is the type of use case that draws much closer scrutiny under the Act, especially in employment settings.

For HR leaders evaluating vendors, this distinction is more useful than broad claims that a system is simply “AI-powered” or “AI-enabled.” The real question is whether the technology is acting as a co-pilot for human decision-makers or whether it is taking on a substantive role in making judgments about people.

How Betterworks approaches AI responsibly

At Betterworks, our approach is rooted in a simple principle: AI should support better performance conversations and better manager effectiveness, not replace human responsibility.

Betterworks describes AI as a co-pilot in performance management, designed to amplify human capabilities and help employees, managers, and HR leaders work more effectively. Its broader AI materials also emphasize a human-centered approach in which AI supports rather than supplants decision-making, and users can accept, modify, or reject recommendations.

That philosophy shows up in how current Betterworks AI features are designed and controlled.

Co-pilot, not autopilot. Betterworks AI is positioned as assistive. It helps users generate and refine goals, feedback, and conversations, and it can surface performance trends and focus areas more quickly. It is intended to support the workflow, not run the workflow on its own.

Human judgment remains pivotal. Betterworks’ own AI solutions materials state that human judgment remains central to decision-making and that final decisions remain in the hands of people. In product workflows, users are reminded to review AI-generated content, and they can accept, modify, or reject suggestions before anything is shared.

Transparent explanations matter. Betterworks AI features are designed to explain why a suggestion was made and what data was used. That kind of transparency helps support trust and gives users more context for evaluating the output rather than treating it as a black box.

Configuration matters. Betterworks gives administrators control over whether AI features are enabled at all, which individual features are enabled, and whether those features are available to all employees or only to specific groups or departments. That flexibility is valuable for organizations that need to tailor rollout decisions by geography, function, or readiness level.

Customer data is not used to train the model. Betterworks’ support documentation states that employee data is not used to train or fine-tune its generative AI model, and that the AI environment is privately hosted. For HR leaders, that is an important part of the broader responsible AI conversation, especially in regulated or privacy-sensitive environments.

Taken together, that is why Betterworks’ current approach is best understood as assistive, human-led AI in performance management. The goal is to help managers and employees work with more clarity and consistency while keeping substantive evaluations and employment decisions with people, where they belong.

What this means for HR leaders evaluating vendors

The EU AI Act is one more reason to ask vendors better questions about how AI actually works inside the product. Marketing language alone is not enough. A responsible vendor should be able to clearly explain intended use, human oversight, controls, and safeguards.

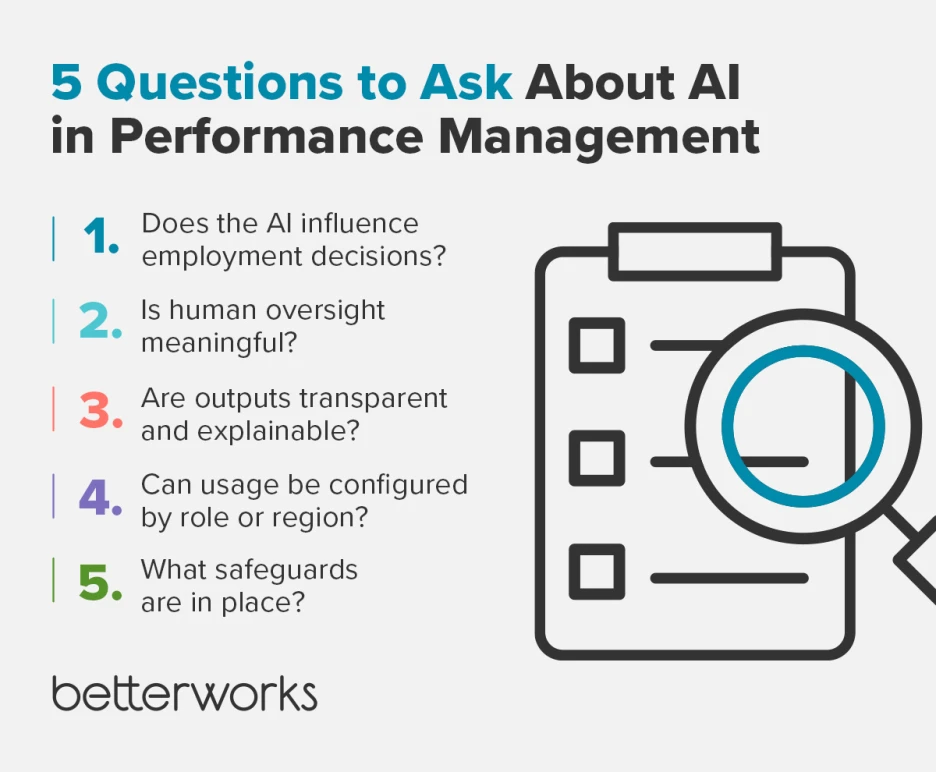

Here are five questions worth asking:

Does the AI make or materially influence employment decisions?

If a vendor’s AI is helping draft language or summarize information, that is one type of use. If it is ranking employees, inferring capability, or shaping outcomes around pay, promotion, or performance ratings, that is another. The difference matters.

Is human oversight real or cosmetic?

A human-in-the-loop claim only matters if users can genuinely review, challenge, modify, or reject outputs before decisions are made. Oversight has to be more than a checkbox.

Are outputs transparent and explainable?

Leaders should know what the system is doing, what data it relies on, and why it generated a given suggestion. That is important for governance, trust, and adoption.

Can access and use be configured?

Large organizations often need different controls for different populations, geographies, or roles. Feature-level and group-level controls can be an important safeguard.

Can the vendor clearly describe its safeguards?

A mature answer should cover intended use, human accountability, privacy protections, transparency, and how the vendor is monitoring evolving guidance. If that answer is vague, HR leaders should press further.

For enterprise HR teams, this is about more than compliance. It is about selecting technology that supports fair, transparent, and people-centered performance practices as AI becomes more common across the workplace.

Betterworks’ commitment going forward

AI regulation is evolving, and the EU AI Act will continue to become more operational as additional guidance is released. The European Commission has already said that further practical guidance is coming on high-risk classification, transparency requirements, high-risk obligations, and the interplay between the AI Act and other EU laws.

That is why Betterworks’ position is not based on hype or shortcuts. We believe AI should help managers and employees do better work, communicate more clearly, and make performance management more consistent and useful. We also believe that substantive evaluations and employment decisions should remain accountable to people.

As AI guidance continues to develop, Betterworks will continue to monitor the landscape and support a responsible, transparent, human-led approach to AI in performance management.

EU AI Act FAQs

Does the EU AI Act apply to HR software?

It can. The Act can apply to providers and deployers outside the EU when the output of the AI system is used in the Union, and employment-related use cases are specifically called out in Annex III. Whether a particular HR feature triggers stricter obligations depends on its intended use and level of risk.

Is AI in performance management considered high-risk?

Some uses can be. AI intended to make decisions affecting the terms of work relationships, promotion, or termination, to allocate tasks based on behavior or traits, or to monitor and evaluate employee performance is explicitly listed in the employment category of Annex III. At the same time, not every AI feature in a performance platform will necessarily be classified the same way, especially where the system is assistive and does not materially influence outcomes.

What is the difference between assistive AI and decision-making AI?

Assistive AI helps users draft, summarize, or improve work that a person still reviews and owns. Decision-making AI plays a substantive role in ranking, scoring, inferring, or determining outcomes about employees. That distinction is central to evaluating risk under the Act.

How does Betterworks use AI in performance management?

Betterworks uses AI to support workflows such as goal creation, writing assistance, summaries, and manager guidance. Betterworks’ product and support materials describe these capabilities as assistive, configurable, and human-centered, with users able to review, edit, and control outputs.

What should HR leaders ask vendors about EU AI Act readiness?

Start with intended use, human oversight, transparency, configuration controls, and data handling. The best vendors should be able to explain not only what their AI can do, but also what it is not designed to do and how safeguards are applied.

Is your performance management approach ready for AI regulation?
